Critical assessment of current practices around the use of the standard penetration test

ABSTRACT

The Standard Penetration Test (SPT) is used worldwide and is perhaps the most common in situ soil test. A recent paper published in the June 2022 edition of Geomechanics News highlighted the use of the test in current New Zealand practice. This paper explores the flaws and limitations of the SPT and asks the question: should the geotechnical profession continue with this outdated, crude test when more sophisticated testing is now readily available?

1. INTRODUCTION

I have been prompted to write this article as a response to a paper published in the June 2022 issue of Geomechanics News by Ruth van Dam. This paper aims to continue a conversation about the use of the SPT in modern-day geotechnical engineering that I think needs to be had. I present this in a somewhat tongue-in-cheek manner, but there is a serious underlying message: should the geotechnical profession continue its obsession with this crude, outdated, inaccurate, expensive test?

In her paper, van Dam has (unintentionally, I think) illustrated just how useless the SPT is, with many examples of how you can’t rely on the test results in almost all the applications she mentions. This is not a criticism of her paper; in fact the paper is well written and nicely illustrates the SPT’s current use and limitations. My response is a criticism of the test and its continued use despite its obvious uselessness. The title of van Dam’s paper was so good that I have copied it as the name of this article, with the important addition of the word “critical” at the start.

2. HOMO INCORRECTUS

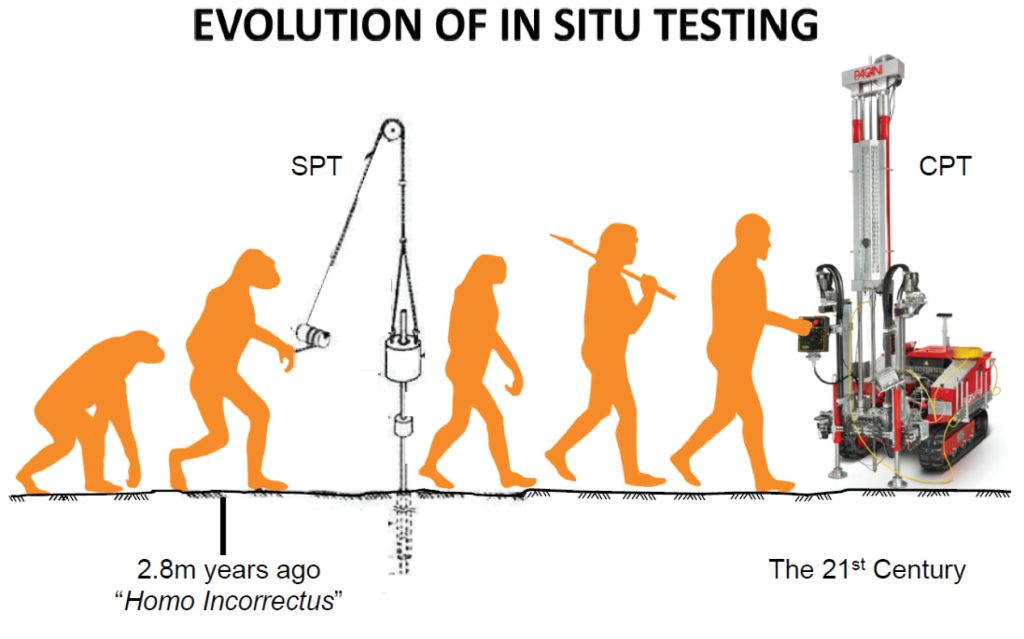

The evolution of in situ testing is illustrated in Figure 1. Archaeologists have discovered that the SPT was first used by the early ancestors of man around 2.8 million years ago. Anthropologists have named this human ancestor “Homo Incorrectus”... OK, so this obviously isn’t true. However, it is believed that man first started using rocks as tools around this time (i.e. hitting things with hammers).

Figure 1: Evolution of in situ testing: SPT to CPT

3. HOW LONG IS A PIECE OF STRING?

It will come as no surprise to anyone that there is a lot of uncertainty with the SPT test. This was highlighted by van Dam (2022) and there is a lot of other published information on this subject. In a general summary, here are some sources of uncertainty:

- Energy efficiency of the hammer

- Disturbance due to drilling

- Imbalance between groundwater and water in the borehole

- Borehole diameter

- Weight of the rod string and hammer

- Rod type, size, straightness and condition

- Overburden effects

So, there are a lot of areas of uncertainty, and this is obviously a problem with the SPT. Not only is there a lot of uncertainty, but there is also uncertainty in the uncertainty. This is perhaps the biggest problem with SPT. Although attempts have been made to correct for some of these uncertainties, there is no way of quantifying these uncertainties. The user of the SPT data has no way of knowing how big or small the errors are in the test. It is the proverbial ‘how long is a piece of string?’

In comparison, errors in the CPT are mostly related to the consistency of the strain gauge load cells that record the data. This is controlled by calibration and zero load readings before and after the test. These provide a quantitative measure of the errors in the testing equipment. Testing to ISO 22476-1 requires the errors to be within certain limits depending on application class. By testing to this standard, the error in the cone resistance (qc) is required to be less than 5% of the measured value. This is usually easily achieved, and errors are often well below 5%.

There is already enough natural uncertainty associated with the engineering properties of the ground. We don’t want additional unknown uncertainty in a test that is trying to measure those ground properties.

4. LIPSTICK ON A PIG

One of the biggest potential errors in SPT is related to the energy efficiency of the hammer. Safety hammers or automatic trip hammers are commonly used in NZ and these provide the best possible energy efficiency. However, despite that, energy efficiencies of these hammers are around 80%. At first glance, that seems pretty good, but when you think about it, this is a heavy weight (63.5 kg) pretty much free falling over a relatively short distance (760 mm). How is it that it loses 20% or so of its potential energy? Most of the energy loss is via air resistance, heat, noise and losses due to compression/vibration through the rod string. But there are also many small factors that can add up to large energy losses. Each time the hammer is dropped, these factors are slightly different and result in different energy losses. Slight differences in the air resistance, the resistance of the guide rod, the wind velocity, the angle of the hammer to vertical, the way the hammer strikes the anvil, the air temperature, etc. will all contribute to variation in the hammer efficiency. Even in the relatively controlled environment of a calibration, the efficiency of each blow may vary by up to around 5%, and much more if the hammer is in poor condition.
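To put the 80% figure in physical terms, the nominal energy of the falling hammer can be worked out from the mass and drop height quoted above. The sketch below is illustrative only: the function names are hypothetical, and g = 9.81 m/s² is an assumed value that the article does not state.

```python
# Nominal SPT hammer figures quoted in the text.
HAMMER_MASS_KG = 63.5
DROP_HEIGHT_M = 0.760
G_M_PER_S2 = 9.81  # assumed standard gravity; not stated in the article


def theoretical_energy_j(mass_kg=HAMMER_MASS_KG, drop_m=DROP_HEIGHT_M, g=G_M_PER_S2):
    """Potential energy of the raised hammer, E = m * g * h, in joules."""
    return mass_kg * g * drop_m


def delivered_energy_j(efficiency, mass_kg=HAMMER_MASS_KG, drop_m=DROP_HEIGHT_M, g=G_M_PER_S2):
    """Energy reaching the rod string for a given efficiency (0 to 1)."""
    if not 0.0 <= efficiency <= 1.0:
        raise ValueError("efficiency must be between 0 and 1")
    return efficiency * theoretical_energy_j(mass_kg, drop_m, g)


if __name__ == "__main__":
    e_full = theoretical_energy_j()
    e_80 = delivered_energy_j(0.80)
    print(f"Theoretical energy: {e_full:.1f} J")
    print(f"Delivered at 80%:   {e_80:.1f} J")
    print(f"Lost per blow:      {e_full - e_80:.1f} J")
```

With these inputs the theoretical energy is about 473 J, so an 80% efficient hammer delivers roughly 379 J and loses about 95 J on every blow.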

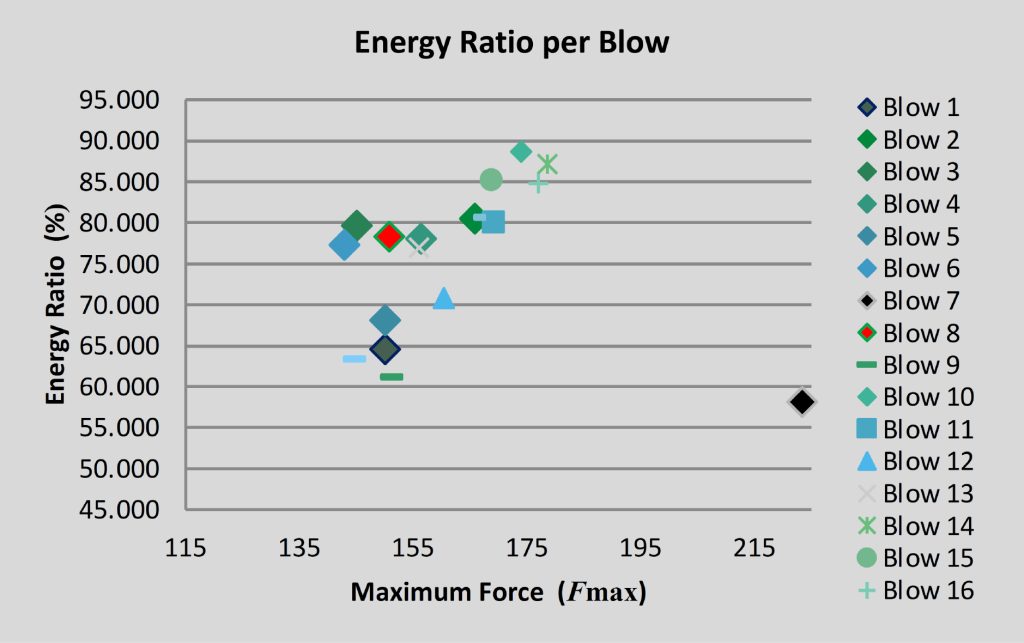

Figure 2 shows the measured energy efficiency of 16 successive blows of a poorly maintained donut hammer. Here the energy efficiency varies between 58% and 89%. Granted, this is from a poorly maintained hammer and is something of an extreme case, but it illustrates the point that the energy efficiency can vary significantly from blow to blow.

Figure 2: Efficiency of successive blows of a poorly maintained hammer (Reading 2020)

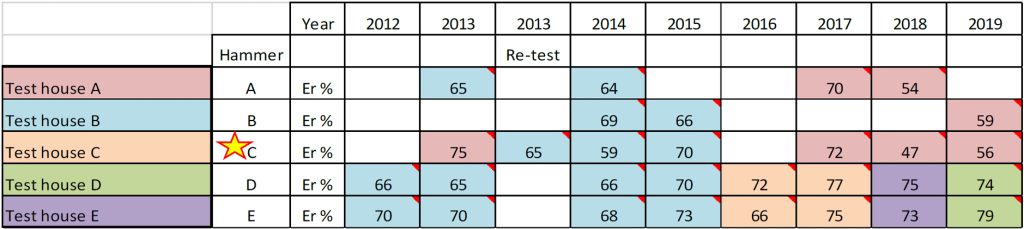

If you have done a calibration on a hammer and return the next day to the same location with the same equipment and repeat the calibration at the same depth, you may find that the energy efficiency is different. Table 1 below shows the annual calibration record of a UK company’s SPT hammers (A to E) over an eight-year period. This shows that successive annual calibrations of a particular hammer can show significant differences in energy efficiency. In one year, the same hammer was calibrated twice with a 10% difference in efficiency. That same hammer showed annual calibration efficiencies of between 47% and 75% over the years.

Table 1: Annual calibration results of SPT hammers of a UK company (Wagstaff 2020)

It is obviously good practice to calibrate the hammer, but don’t be fooled into believing that the test has magically become super accurate because the energy efficiency has been measured (or ‘calibrated’). The energy efficiency varies for every blow and there is no way of being certain as to what the variation in energy efficiency is. The uncertainty of the uncertainty remains. The only way to know with more certainty is to do energy measurements for each blow of each test, which would not be practicable. Even if you did do that, the other uncertainties that are inherent with the test are still present. The energy calibration is simply lipstick on a pig.

5. WILL THE REAL STANDARD ENERGY EFFICIENCY PLEASE STAND UP?

The ‘standard’ energy efficiency correction to 60% (N60) is an attempt to utilise existing database information and correlations from historic data that had unknown energy efficiencies. It has become accepted that 60% is the ‘standard’ to correct to, as it is considered that the SPT data in the historic database was from equipment that would have been around 60% efficient. But the actual efficiency within the database is not known and is disputed by some. Kovacs et al. (1983) believe the actual value should be closer to 55%, and Bowles (1996) that it should be 70%. So, again, there is some uncertainty when it comes to applying historic correlations.
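The energy correction itself is a simple ratio, N60 = N × ER / 60, where ER is the measured energy efficiency in percent. The sketch below applies only this energy term; other correction factors that are sometimes applied (rod length, borehole diameter, sampler type) are deliberately left out, and the function name and the example blow count of 20 are hypothetical.

```python
def energy_corrected_n(n_measured, efficiency_pct, reference_pct=60.0):
    """Scale a field blow count to a reference energy efficiency.

    Implements N_ref = N * ER / ER_ref (energy term only).
    """
    if n_measured < 0:
        raise ValueError("blow count cannot be negative")
    if efficiency_pct <= 0 or reference_pct <= 0:
        raise ValueError("efficiencies must be positive percentages")
    return n_measured * efficiency_pct / reference_pct


if __name__ == "__main__":
    n_field = 20  # hypothetical field blow count

    # Effect of the calibration range reported for one hammer (47% to 75%).
    for er in (47.0, 75.0):
        print(f"ER = {er:.0f}%  ->  N60 = {energy_corrected_n(n_field, er):.1f}")

    # Effect of the disputed reference efficiency for an 80% efficient hammer.
    for ref in (55.0, 60.0, 70.0):
        n_ref = energy_corrected_n(n_field, 80.0, reference_pct=ref)
        print(f"reference = {ref:.0f}%  ->  corrected N = {n_ref:.1f}")
```

For a field count of 20, the 47% to 75% calibration range reported earlier gives N60 between about 16 and 25, and moving the reference efficiency from 55% to 70% for an 80% efficient hammer moves the corrected value from about 29 to about 23.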

6. HOW MANY FINGERS AM I HOLDING UP?

The SPT test involves counting in your head the number of blows of the hammer. It is not uncommon that two people observing the same test arrive at different values for the number of blows. In that situation you must choose one value, which might be the wrong one, or both might be wrong. Again, just another uncertainty to add to the list.

7. COLLATERAL DAMAGE

By doing an SPT test after every run, you are effectively destroying around one third of the borehole core. If no SPTs are performed, the driller can focus on the borehole’s primary function, i.e. to obtain good quality core. Avoid the collateral damage by not doing SPT tests and get better core.

8. CHEAP AT TWICE THE PRICE?

The SPT is often described as being a ‘cheap’ test. Some would say that if you are doing a borehole anyway, you might as well do SPTs while you’re at it. The implication is that there is little added cost. Wrong: SPTs take time and there is cost associated with the equipment purchase, maintenance and calibration. A drilling contractor would normally charge around $65 for each SPT test. In a 15 m deep borehole with SPTs after every run, that equates to $650. This is about what a CPT test would cost for the same depth. The SPTs in that 15 m borehole are giving you 10 data points with depth, whereas the CPT will provide typically 4,500 data points. That’s less than 20 cents per data point in the CPT compared to $65 for the SPT. The colloquial saying is ‘cheap at twice the price’. But the CPT is cheaper at 300 times the price. In this respect, the SPT is not a cheap test at all.
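The arithmetic behind this comparison can be laid out as a short sketch. The prices and data point counts are those quoted above; the CPT cost is assumed to be exactly equal to the SPT total (the text says only that it is "about" the same), and the function name is hypothetical.

```python
def cost_per_data_point(total_cost, n_points):
    """Average cost of one data point for a given total test cost."""
    if n_points <= 0:
        raise ValueError("number of data points must be positive")
    return total_cost / n_points


SPT_COST_EACH = 65.0    # $ per SPT test, from the text
N_SPT_TESTS = 10        # tests in a 15 m borehole, from the text
N_CPT_POINTS = 4500     # typical CPT data points over 15 m, from the text

SPT_TOTAL = SPT_COST_EACH * N_SPT_TESTS
CPT_TOTAL = SPT_TOTAL   # assumption: CPT priced the same as the SPT total

spt_unit = cost_per_data_point(SPT_TOTAL, N_SPT_TESTS)
cpt_unit = cost_per_data_point(CPT_TOTAL, N_CPT_POINTS)
price_ratio = spt_unit / cpt_unit

if __name__ == "__main__":
    print(f"SPT: ${spt_unit:.2f} per data point")
    print(f"CPT: ${cpt_unit:.2f} per data point")
    print(f"SPT data point costs about {price_ratio:.0f} times the CPT one")
```

Taking the CPT cost as exactly $650 gives about 14 cents per data point and a ratio of about 450. Using the rounded 20 cents instead gives a ratio of 325, in line with the figure of 300 quoted above.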

9. ROUGH AS GUTS

Drillers have had a reputation in the past of being ‘rough as guts’. However, drilling contractors in NZ nowadays use modern sophisticated rigs and sampling methods such as wireline triple tube coring or sonic drilling techniques. These contractors are professionals that strive to provide good quality work. The NZ drilling industry can be considered ahead of many other countries in this respect (think shell and auger drilling in the UK). The SPT test is somewhat at odds with modern sophisticated drilling methods. It is not the drillers that are rough as guts, it is the SPT test. I am sure most drillers would be happy to ditch SPT and concentrate their efforts on getting good core recovery.

10. HIT AND MISS

The SPT is a discrete test, typically done at 1.5 m intervals between borehole core runs. As such there is a whole bunch of soil that is not tested, and furthermore, the test is done over a total of 450 mm and so averages out the ground properties over the test depth. Consequently, the test lacks definition and can miss important information between tests. Thin layers of softer or harder material can easily be missed but could prove to be critical information. Given that the test only covers at best one third of the total borehole depth, the test is definitely more ‘miss’ than ‘hit’.

The CPT, on the other hand, provides continuous information with depth, so it is entirely ‘hit’ and no ‘miss’.

11. CORRELATION STREET

Correlations between SPT and soil parameters are generally unreliable. This is partly due to the unreliability and unrepeatability of the test itself, but also to the mismatch between a single-value test and the complexities of soil behaviour. And the correlations are purely empirical.

The CPT, on the other hand, has many reliable correlations, usually with a strong basis in theory. The test commonly measures three values (qc, fs and u2). The combination of these three values allows more complex correlations that can be applied conditionally to different soil types. The huge and growing database of information made possible by the highly data-rich CPT means that correlations to soil parameters are constantly improving.

The CPT can also be correlated to SPT. Jefferies and Davies (1993) suggest that their correlation from CPT to SPT can provide better estimates of N60 than the SPT test itself. So, if (for some reason) you do want SPT N values, then you are better off doing a CPT and then correlating to N60. At least then you will also get a continuous profile of estimated SPT N values.
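A minimal sketch of such a conversion is given below, assuming the form in which the Jefferies and Davies (1993) correlation is commonly quoted, (qt/pa)/N60 = 8.5(1 − Ic/4.6), where qt is the corrected cone resistance, pa is atmospheric pressure in the same units and Ic is their soil behaviour type index. Ic is taken as an input here (its calculation from qc, fs and u2 is not shown), the function name and example values are hypothetical, and the expression should be checked against the original paper before use.

```python
ATMOSPHERIC_PRESSURE_KPA = 100.0  # assumed round value, roughly 1 atm


def n60_from_cpt(qt_kpa, ic, pa_kpa=ATMOSPHERIC_PRESSURE_KPA):
    """Estimate an equivalent SPT N60 from CPT data.

    Assumed form of the correlation: (qt / pa) / N60 = 8.5 * (1 - Ic / 4.6).
    """
    if qt_kpa < 0 or pa_kpa <= 0:
        raise ValueError("qt must be non-negative and pa must be positive")
    if ic >= 4.6:
        raise ValueError("correlation is undefined for Ic >= 4.6")
    ratio = 8.5 * (1.0 - ic / 4.6)  # (qt/pa) per blow at this Ic
    return (qt_kpa / pa_kpa) / ratio


if __name__ == "__main__":
    # Hypothetical reading: qt = 10 MPa with Ic = 2.0.
    print(f"Estimated N60: {n60_from_cpt(10_000.0, 2.0):.1f}")
```

Applied at every depth increment of a CPT record, a function like this would give the continuous profile of estimated N60 values mentioned above.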

12. IS IT A ROCKY TEST?

SPT is an in situ test for soil, but it is often used in very weak rocks. For example, it is standard practice to use SPT in Auckland’s Waitemata Group weathering profile to identify the ‘top of rock’, taken as the depth at which SPT N > 50. However, this indicator is not reliable in that it does not necessarily relate directly to material that may be considered rock (e.g. from hand description or UCS testing). That is, N > 50 isn’t always rock and N < 50 can sometimes be rock. But let’s assume that N > 50 is rock. What do you then do with that information? How do you use a number that is something greater than 50 in design? It may provide a rough indicator of ‘top of rock’ but is useless as a design input value. A designer (of, say, pile foundations) would have to assume (read ‘guess’) what the strength of that ‘rock’ is. Not a very elegant design process.

Fortunately, the CPT is a test that can be done in very weak rocks. For example, in the Waitemata Group, the CPT can often be pushed several metres beyond what SPT may show as N > 50. With this comes a continuous cone resistance value that can actually be used directly in design (for pile design, for example).

The SPT is indeed a ‘rocky’ test, but only in the sense that it is unreliable. Not as a test for rock.

13. CONCLUSION

SPT is not a reliable test. There are many areas of uncertainty associated with the test, almost all of which are unquantifiable; there is uncertainty in the uncertainty. Furthermore, correlations to soil parameters are questionable. It’s not even cheap in comparison to the amount of information you get from it. Why then does the geotechnical profession continue to hold on to this costly, unreliable, (pretty much) useless test? With the wide availability of CPT in NZ, why even bother doing SPT? If you want to do a borehole, then use that borehole to concentrate on getting good core recovery and forget SPT. The money you save in not doing SPT tests can be used to do a CPT next to the borehole (preferably, CPT first). That way you get more reliable, continuous data that you can even correlate back to SPT N60 if that’s what you want.

In the author’s opinion, the geotechnical profession is stuck in a rut, holding on to outdated tests and theories that we know aren’t reliable but still can’t seem to move on from. Let’s let go of SPTs, Scala penetrometers, hand auger boreholes, etc. Let’s stop hitting things with hammers and embrace the ‘new’ in situ technologies of CPT, DMT and geophysics, which are all currently available from many contractors throughout NZ.

References

Bowles, J. E., 1996. “Foundation Analysis and Design,” Fifth edition, The McGraw-Hill Companies, Inc.

Jefferies, M.G. and Davies, K., 1993. “Use of CPTU to estimate equivalent SPT N60,” Geotechnical Testing Journal, ASTM, 16(4): 458-468.

Kovacs, W. D., Salomone, L. A., and Yokal, F. Y., 1983. “Comparison of Energy Measurements in the Standard Penetration Test Using the Cathead and Rope Method,” National Bureau of Standards Report to the US Nuclear Regulatory Commission.

Reading, P., 2020. “The Standard Penetration Test: Its Origin, Evolution and Future,” AGS webinar, 15th Sept. 2020.

van Dam, R.I.C., 2022. “Assessment of Current Practices Around the Use of the Standard Penetration Test,” NZ Geomechanics News, Issue 103, June 2022: 34-39.

Wagstaff, S., 2020. “The Standard Penetration Test: Its Origin, Evolution and Future,” AGS webinar, 15th Sept. 2020.